
Harness is a collaborative computer science research effort of Oak Ridge National Laboratory (ORNL), the University of Tennessee, Knoxville, and Emory University on advanced software solutions for parallel and distributed computing systems, with an emphasis on scientific high-performance computing (HPC). While past Harness work focused on heterogeneous distributed virtual machine and fault-tolerant runtime environments, ongoing research targets optimized development and deployment processes for scientific applications. The following two paragraphs describe ongoing and past work in more detail.

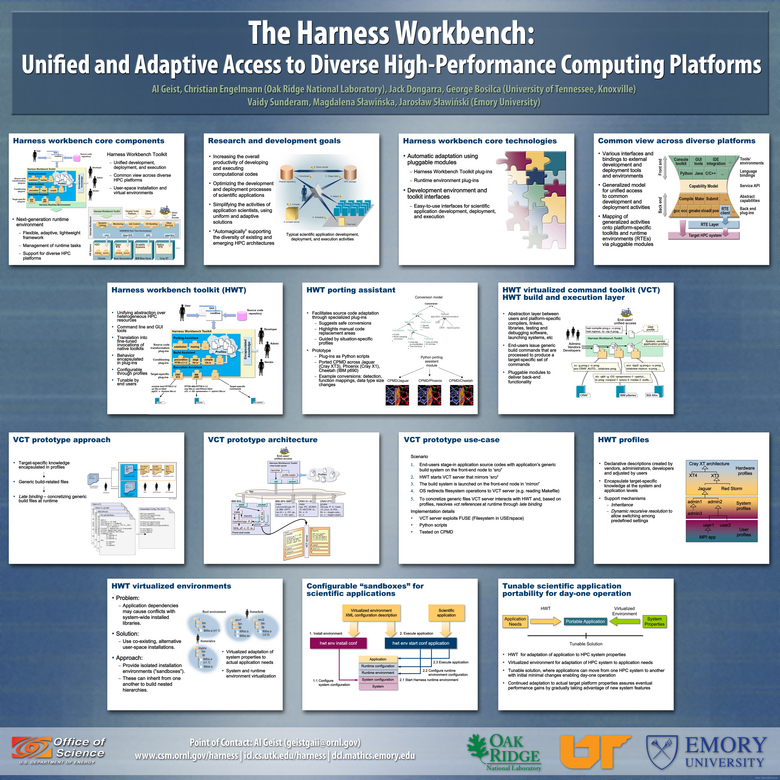

Harness Workbench: Unified and Adaptive Access to Diverse HPC Platforms

The goal of this project is to enhance the overall productivity of applications science on diverse high-performance computing platforms through research on two innovative software environments. The first is the Harness Workbench Toolkit (HWT) for application building and execution, which provides a common view across diverse HPC systems. The HWT consists of a software backplane architecture that presents a uniform but extensible interface for the preparatory and pre-execution stages of application execution and interfaces to instance-specific software via customizable plug-in modules. The second is the next-generation Harness runtime environment (HRTE), which similarly provides a flexible, adaptive framework for plugging in modules optimized for a specific HPC system and allows dynamic interfacing to a variety of user environments. Both environments will employ platform-specific pluggable modules, disseminating target-specific knowledge and expertise immediately to all end users, who can continue to interface to a familiar environment.
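
The backplane idea described above, a uniform interface that delegates to platform-specific plug-in modules, can be illustrated with a minimal sketch. This is not the HWT interface: the class `Backplane`, its methods, the platform names, and the compiler command strings are all hypothetical and chosen only to show the pattern.

```python
from typing import Callable, Dict

# Hypothetical illustration only; none of these names come from the HWT.
Plugin = Callable[[str], str]


class Backplane:
    """Uniform front end that dispatches to platform-specific plug-ins."""

    def __init__(self) -> None:
        self._plugins: Dict[str, Plugin] = {}

    def register(self, platform: str, plugin: Plugin) -> None:
        # Each plug-in encodes the knowledge specific to one platform.
        self._plugins[platform] = plugin

    def build_command(self, platform: str, source: str) -> str:
        # The caller sees the same call regardless of the target system.
        try:
            plugin = self._plugins[platform]
        except KeyError:
            raise LookupError(f"no plug-in registered for {platform!r}") from None
        return plugin(source)


backplane = Backplane()
# Placeholder platforms and command lines, assumed for the example.
backplane.register("generic-cluster", lambda src: f"mpicc -O2 {src}")
backplane.register("vendor-x", lambda src: f"cc -fast {src}")
```

In this sketch a user always calls `backplane.build_command(platform, source)`, while the person maintaining a given system updates only that system's plug-in.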

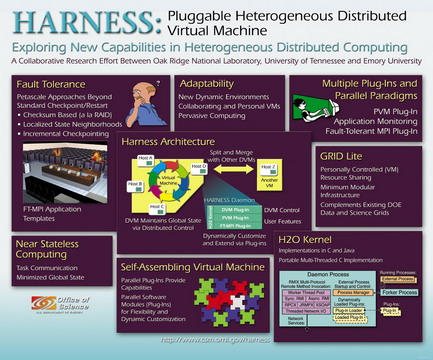

Harness: Heterogeneous Distributed Computing

The heterogeneous adaptable reconfigurable networked systems (Harness) research project focused on the design and development of a pluggable, lightweight, heterogeneous Distributed Virtual Machine (DVM) environment, where clusters of PCs, workstations, and "big iron" supercomputers can be aggregated to form one giant DVM, in the spirit of its widely used predecessor, Parallel Virtual Machine (PVM). As part of the Harness project, a variety of experiments were conducted and system prototypes developed to explore lightweight pluggable frameworks, adaptive reconfigurable runtime environments, assembly of scientific applications from software modules, parallel plug-in paradigms, highly available DVMs, fault-tolerant message passing (FT-MPI), fine-grain security mechanisms, and heterogeneous reconfigurable communication frameworks. Three different Harness system prototypes were developed, two C variants and one Java-based alternative, each concentrating on different research issues. The technology developed within the Harness project influenced many other research and development efforts, such as Open MPI and MOLAR.

This research is sponsored by the Office of Advanced Scientific Computing Research, Office of Science, U.S. Department of Energy. The work is performed jointly at Oak Ridge National Laboratory, which is managed by UT-Battelle, LLC under Contract No. DE-AC05-00OR22725, the University of Tennessee, Knoxville, and Emory University. Please contact engelmannc@ornl.gov with questions or comments regarding this page.
